The rapid development of digital technology has been the main trend in consumer electronics in recent decades. Unfortunately, every high-tech advance requires solving a large number of individual complex problems. The development of digital television illustrates this well.

In 2014, Dolby Laboratories introduced its Dolby Vision technology, a breakthrough in encoding and transmitting video with High Dynamic Range (HDR). The technology radically improved color rendering through an extended color gamut and up to 12-bit color depth.

Later, Samsung and other leading companies formed an association and developed the open HDR10 standard. Initially, it was limited to static metadata. The companies then extended the standard to HDR10+, which supports dynamic metadata.

In parallel with the development of HDR technology, companies continued to increase the resolution of TV panels. As a result, at IFA 2018 Samsung and LG for the first time demonstrated TVs with 8K support, marking the further development of this direction.

8K (8K UHD, also called UHD-2 or Super Hi-Vision, SHV) uses 7680 x 4320 pixels (about 33 MP). Of course, the sharp increase in the volume of information dramatically raises the bandwidth requirements of the communication channel.
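As a quick check of the arithmetic, the following minimal sketch compares the pixel counts of common resolutions. The dictionary and function names are illustrative only and are not part of any standard; at a fixed frame rate and color depth, the uncompressed data volume grows in the same proportion as the pixel count.

```python
# Pixel counts of common TV resolutions (width x height).
# The names below are illustrative helpers, not part of any specification.
RESOLUTIONS = {
    "Full HD": (1920, 1080),
    "4K UHD": (3840, 2160),
    "8K UHD": (7680, 4320),
}


def pixel_count(name):
    """Return the number of pixels in one frame of the named resolution."""
    width, height = RESOLUTIONS[name]
    return width * height


def scale_vs(name, baseline="Full HD"):
    """Return how many times more pixels `name` has than `baseline`."""
    return pixel_count(name) / pixel_count(baseline)


for name in RESOLUTIONS:
    print(f"{name}: {pixel_count(name) / 1e6:.1f} MP, "
          f"{scale_vs(name):.0f}x Full HD")
```

An 8K frame holds 33,177,600 pixels, which is four times a 4K UHD frame and sixteen times a Full HD frame.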

Thus, innovations in color rendition and panel resolution have drastically increased the bandwidth needed to transmit video content.

HDMI

High-Definition Multimedia Interface (HDMI) was developed by Hitachi, Matsushita Electric Industrial, Philips, Silicon Image, Sony, Thomson (RCA) and Toshiba to transfer high-resolution digital video content. In addition, HDMI provides copy protection (High-bandwidth Digital Content Protection, HDCP).

In fact, HDMI is an enhanced version of the digital DVI connection that links devices using appropriate cables.

But the HDMI connector is smaller than DVI, and HDMI also supports multi-channel digital audio. Thus, HDMI has replaced analog connection standards, including SCART, VGA, YPbPr, RCA and S-Video.

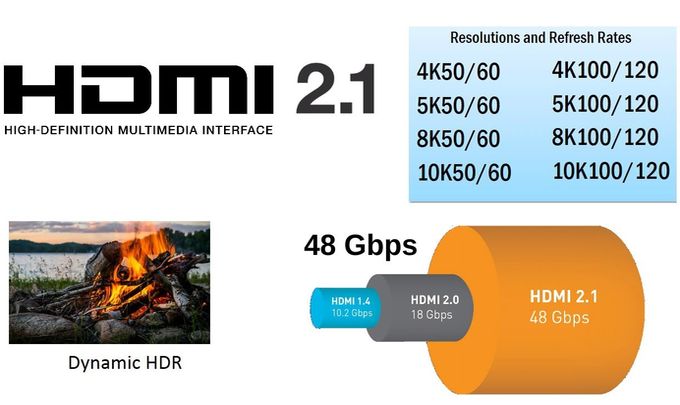

This standard uses simple and convenient version labeling. Fundamentally new versions receive a new number: 1.0, 1.1, 1.2, 1.3, 1.4, 2.0 and 2.1. Functional extensions add a letter, as in 1.2a, 1.3a, 1.4b and 2.0a. After HDMI 1.4 and 2.0 came the improved HDMI 2.0a, which added the transfer of HDR metadata between the source of HDR content and the TV. Today, HDMI 2.1 is the latest version.

This diagram contains the main functional differences between the HDMI versions.

Unfortunately, the new HDMI versions still carry the risk of port damage when devices are connected while powered. A device case can accumulate a fairly high static potential, which can reach 100 volts. Of course, disconnecting the device from the outlet eliminates this risk, but that is hardly convenient. Companies are trying to solve the problem by adding protection to device circuitry, but an optional adapter is more reliable. Today the market offers a wide range of adapters that discharge the potential on the case at the moment of connection.

Key features

HDMI 2.0a was developed to carry the metadata needed for correct playback of HDR content. It added static HDR metadata, while the transfer of dynamic metadata was later standardized in HDMI 2.1 as Dynamic HDR. Dynamic metadata contains scene-by-scene or frame-by-frame information, as opposed to static metadata, which contains a single set of values for the entire video. In practice, the technology makes playback more realistic, because each scene is displayed with the color decisions of the director and colorist. During production, several shots with different exposures can be taken at the same time. They are then compared and combined into one frame with improved quality, with more detail in the shadows and in very bright areas of the image.
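The difference between static and dynamic metadata can be sketched as a small data structure. This is an illustrative model only: the class names, field names and luminance values below are hypothetical and do not reproduce the actual HDR10, HDR10+ or Dolby Vision metadata formats.

```python
from bisect import bisect_right
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class LuminanceInfo:
    """Hypothetical luminance description used by the display for tone mapping."""
    max_nits: float      # brightest level the display should expect
    average_nits: float  # average light level


@dataclass(frozen=True)
class StaticMetadata:
    """One set of values for the whole video."""
    info: LuminanceInfo

    def for_frame(self, frame_index: int) -> LuminanceInfo:
        # Every frame gets the same description.
        return self.info


@dataclass(frozen=True)
class DynamicMetadata:
    """One set of values per scene."""
    scene_starts: Tuple[int, ...]           # first frame of each scene, ascending, starting at 0
    scene_info: Tuple[LuminanceInfo, ...]   # one entry per scene

    def for_frame(self, frame_index: int) -> LuminanceInfo:
        if frame_index < 0:
            raise ValueError("frame_index must be non-negative")
        # Find the last scene whose first frame is <= frame_index.
        scene = bisect_right(self.scene_starts, frame_index) - 1
        return self.scene_info[scene]


# Made-up example: a dark scene, a very bright scene, then a moderate one.
static = StaticMetadata(LuminanceInfo(1000.0, 400.0))
dynamic = DynamicMetadata(
    scene_starts=(0, 240, 600),
    scene_info=(
        LuminanceInfo(120.0, 40.0),
        LuminanceInfo(1000.0, 400.0),
        LuminanceInfo(400.0, 150.0),
    ),
)

for frame in (0, 300, 700):
    print(frame, static.for_frame(frame).max_nits, dynamic.for_frame(frame).max_nits)
```

With static metadata the dark opening scene is described with the same 1000-nit peak as the brightest scene, while the dynamic variant lets the display adapt its tone mapping to each scene.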

Accordingly, a larger number of brightness gradations expands the brightness range of each scene. It also significantly deepens black levels, because the range no longer has to be narrowed to bring out the shadow areas of the scene.

Of course, this technology requires the transfer of more information, which is recorded as additional data (metadata).

Thus, HDR playback requires a standard video stream (4K or 2K) plus luminance metadata for it. Of course, this technology is relevant only for high-end TVs that support an HDR standard.

A TV with an HDMI 2.0 port does not accept HDR metadata and plays HDR content as standard video. The HDMI 2.0a interface solves this problem. In addition, TVs without HDR support cannot decode color signals with 10-bit depth or higher.
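To put the bit depths mentioned in this article into numbers, here is a minimal sketch that counts the gradations per color channel and the total number of representable colors. It assumes three color channels per pixel, and the function names are illustrative.

```python
def levels_per_channel(bits):
    """Number of distinct gradations one channel can encode at this bit depth."""
    return 2 ** bits


def total_colors(bits, channels=3):
    """Number of distinct colors with `channels` channels of `bits` bits each."""
    return levels_per_channel(bits) ** channels


for bits in (8, 10, 12):
    print(f"{bits}-bit: {levels_per_channel(bits)} levels per channel, "
          f"{total_colors(bits):,} colors")
```

Moving from 8 to 10 bits raises the gradations per channel from 256 to 1024, and 12 bits gives 4096.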

This technology also benefits from very high contrast and peak brightness, which today mainly OLED and QLED models provide.

Functionality

HDMI 2.0 was officially announced on September 4, 2013, and the 2.0a and 2.0b revisions followed later. Models with HDMI 2.0 support first went on sale in 2014. However, the earlier HDMI 1.2-1.4 versions also provide excellent viewing of satellite and cable HD channels and movies. HDMI 2.1 was first introduced in January 2017.

Today, HDMI 1.4 and 2.0 are the most widespread versions. Their main difference is interface bandwidth: 10.2 Gbps for HDMI 1.4 versus 18 Gbps for HDMI 2.0.

HDMI 1.4 supports the full potential of Full HD (1920 × 1080) on HD channels, as well as 4K content at 30 Hz. In addition, it supports most of the popular features, including 3D, ARC and CEC.

HDMI 2.0 and higher, however, provide a large increase in resolution, bandwidth and frame rate. The extended functionality of HDMI 2.0 includes Full HD at 120 fps, 4K at 60 fps, 4:2:0 chroma subsampling, 25 fps 3D formats, and the HE-AAC and DRA audio standards.

Of course, some experts periodically question the importance of these aspects, pointing to the gap between the capabilities of TVs and the available content. History offers a similar dispute around 3D television, where the high cost and complexity of producing 3D content ultimately closed that direction. On the other hand, expert disputes are unlikely to affect the natural evolution of technology. We can only hope that content producers will be able to make use of the enormous potential of today's innovative TVs. For now, though, HDR technology and 8K resolution are aimed only at premium home TVs.

HDMI 2.1

The HDMI 2.1 specification adds support for Dynamic HDR and for Game Mode VRR, a variable refresh rate mode aimed at gaming and virtual reality.

It uses a new cable that delivers 48 Gbps, enough for resolutions up to 10K and for 8K HDR.

The cable is backward compatible with HDMI 1.3, 1.4 and 2.0a.

HDMI 2.1 supports:

– 8K @ 60fps – 7680 x 4320 pixels, 60 Hz;

– 4K @ 120fps – 4096 x 2160 pixels (True 4K) or 3840 x 2160 pixels (4K UHD on 16:9 screens), 120 Hz.

For comparison, HDMI 2.0a only supports 4K at 60 Hz.
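A rough way to see why these modes need a newer interface is to compare the uncompressed video data rate with the capacity of each link. The sketch below is an approximation under stated assumptions: the link rates of 10.2 Gbps (HDMI 1.4) and 18 Gbps (HDMI 2.0) and the line-coding overheads are not taken from this article, blanking intervals and audio are ignored, and compression is not modeled. All names are illustrative.

```python
# Approximate usable video data rate per version, in Gbps, after line coding.
# Assumed figures: 10.2 and 18 Gbps links with 8b/10b coding,
# and a 48 Gbps link with 16b/18b coding.
MAX_DATA_RATE_GBPS = {
    "HDMI 1.4": 10.2 * 8 / 10,
    "HDMI 2.0": 18.0 * 8 / 10,
    "HDMI 2.1": 48.0 * 16 / 18,
}

# Average number of samples stored per pixel for each chroma format.
SAMPLES_PER_PIXEL = {"4:4:4": 3.0, "4:2:2": 2.0, "4:2:0": 1.5}


def required_gbps(width, height, fps, bits=8, chroma="4:4:4"):
    """Uncompressed data rate of the active picture, ignoring blanking."""
    return width * height * fps * bits * SAMPLES_PER_PIXEL[chroma] / 1e9


def versions_that_fit(width, height, fps, bits=8, chroma="4:4:4"):
    """List the versions whose approximate capacity covers the mode."""
    need = required_gbps(width, height, fps, bits, chroma)
    return [name for name, limit in MAX_DATA_RATE_GBPS.items() if need <= limit]


modes = [
    ("4K @ 30 Hz, 8-bit", (3840, 2160, 30, 8, "4:4:4")),
    ("4K @ 60 Hz, 8-bit", (3840, 2160, 60, 8, "4:4:4")),
    ("4K @ 120 Hz, 8-bit", (3840, 2160, 120, 8, "4:4:4")),
    ("8K @ 60 Hz, 10-bit 4:2:0", (7680, 4320, 60, 10, "4:2:0")),
]
for label, args in modes:
    print(f"{label}: {required_gbps(*args):.1f} Gbps -> {versions_that_fit(*args)}")
```

Because blanking is ignored, the real requirements are somewhat higher than these estimates, but the ordering stays the same: 4K at 30 Hz fits every version, 4K at 60 Hz needs HDMI 2.0 or later, and 4K at 120 Hz or 8K at 60 Hz needs HDMI 2.1.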

The Dynamic HDR protocol transfers metadata dynamically (scene by scene or frame by frame), so that each scene is displayed with appropriate depth, detail, brightness, contrast and color gamut.

Game Mode VRR (Variable Refresh Rate) is similar to the G-Sync / FreeSync technologies in modern monitors. A variable refresh rate lets the display follow the frame rate of the source, which reduces display lag, stutter and frame tearing.
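The effect on lag can be illustrated with a deliberately simplified timing model. It assumes that a fixed-rate display shows a finished frame only at its next refresh tick, while a VRR display can start a refresh as soon as the frame is ready, limited only by its maximum refresh rate. The function names and the 60 Hz and 120 Hz figures are illustrative assumptions, not values from the HDMI specification.

```python
import math


def fixed_refresh_wait_ms(ready_ms, refresh_hz=60.0):
    """Time a finished frame waits for the next tick of a fixed-rate display."""
    period = 1000.0 / refresh_hz
    next_tick = math.ceil(ready_ms / period) * period
    return next_tick - ready_ms


def vrr_wait_ms(ready_ms, last_refresh_ms, max_hz=120.0):
    """Time a finished frame waits on a VRR display.

    The display may refresh as soon as the frame is ready, but not sooner
    than one minimum period after the previous refresh.
    """
    min_period = 1000.0 / max_hz
    earliest = last_refresh_ms + min_period
    return max(0.0, earliest - ready_ms)


# A frame that becomes ready 20 ms after the previous refresh at t = 0.
print(f"fixed 60 Hz: waits {fixed_refresh_wait_ms(20.0):.1f} ms")
print(f"VRR:         waits {vrr_wait_ms(20.0, 0.0):.1f} ms")
```

In this model the frame that is ready at 20 ms waits about 13.3 ms for the next 60 Hz tick, but is shown immediately with VRR.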

QMS (Quick Media Switching) switches the screen between different frame rates without delay, eliminating the black screen.

eARC (Enhanced Audio Return Channel) increases the bandwidth of the HDMI audio return channel so that advanced audio formats, including Dolby Atmos, are passed through without loss.

In addition, HDMI 2.1 supports advanced audio formats, including object-based audio.

At CES 2019, LG first introduced the 65C9 OLED TV with HDMI 2.1 support.

Today, the HDMI version is one of the factors in choosing a TV.

The video offers an interview with Brad Bramy of HDMI Licensing Administrator, Inc. at CES 2019 about the possibilities and prospects of HDMI technology.