In recent years, development in the consumer electronics segment has been marked by a pronounced change in trends. Many component specifications already exceed what users can perceive, so improving them further often brings little noticeable benefit to the product. Such improvements are also often very expensive. Examples include 8K TVs (there is little 8K content) and ultra-bright TV panels (the peak brightness of the Samsung Neo QLED QN90A 4K exceeds 1,500 nits, which matters only for HDR content that is still hard to find).

The situation is similar in other segments. For example, the new $450 Google Pixel 6a uses the Google Tensor chip, which is firmly among the best on the market.

Yet even the SoCs of today's budget models provide more performance than most users need.

Industry leaders naturally track these trends and adjust their strategies accordingly. The development of Google Tensor may be one such response.

A few years ago, Google (notably with its Gboard keyboard) began focusing on improving the efficiency of heterogeneous compute and workloads through machine learning (ML). Previously, ML algorithms relied only on cloud processing, which offers virtually unlimited storage for huge datasets. Unfortunately, this approach has several significant drawbacks, including slow response times, privacy concerns and bandwidth limitations. A rapidly developing approach called ‘federated learning’ combines on-device and cloud-based ML and can be seen as a hybrid of the two. The unusual Google Tensor configuration (2+2+4 instead of the traditional 1+3+4) is optimized for this technology.
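To make the hybrid idea more concrete, here is a minimal sketch of federated averaging (FedAvg), a common form of federated learning. Each device trains on its own data and sends only the updated model parameters to the server. The server then averages them, weighting each device by its number of samples. The one-parameter model, learning rate, client data and function names are hypothetical illustrations, not Google's implementation.

```python
# Minimal federated averaging (FedAvg) sketch: each client fits y ≈ w * x
# on its own private data; only the updated weight leaves the device.

def local_update(w, data, lr=0.01, epochs=5):
    """Run a few epochs of gradient descent on one client's local data."""
    for _ in range(epochs):
        # Gradient of the mean squared error for the model y = w * x
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w


def federated_round(w_global, clients):
    """One FedAvg round: clients train locally, the server averages the results."""
    total = sum(len(data) for data in clients)
    # Weight each client's model by the number of samples it trained on
    return sum(local_update(w_global, data) * len(data) for data in clients) / total


# Hypothetical private datasets on three devices, all following y = 3x
clients = [
    [(1.0, 3.0), (2.0, 6.0)],
    [(0.5, 1.5), (1.5, 4.5), (3.0, 9.0)],
    [(2.5, 7.5)],
]

w = 0.0
for _ in range(20):
    w = federated_round(w, clients)
print(round(w, 3))
```

The server never sees the raw (x, y) pairs, only the weights returned by `local_update`. That separation is what addresses the privacy and bandwidth concerns mentioned above.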

ML has already significantly expanded smartphone functionality. Examples include Smart Reply, Recorder App, Live Caption, the HDRNet algorithm, Single Take and Adaptive Brightness (automatic adjustment of screen brightness). Most of these features quickly became popular with consumers, which proves their relevance.

Given the strong prospects for ML apps, the Google Tensor SoC understandably attracts considerable interest.

Machine Learning (ML)

In general, ML is a branch of artificial intelligence (AI) in which decision-making algorithms are derived from data rather than programmed explicitly. By analyzing solutions to many similar problems, the system identifies patterns on its own and proposes solutions.

A brief illustration may be helpful. Here is how ML can work in a simple text-analysis task.

A text snippet can be meaningful, such as ‘Michael likes to fish, John prefers to ride a bike, Smith enjoys reading books, but they are all passionate football fans’, or nonsense, such as ‘kcxhIkGnvjdhPhfdRWjshfdldkjOPqS’. The ML algorithm should distinguish between them. By analogy with anti-virus software, meaningful text can be called ‘clean’ and gibberish ‘malicious’. In effect, the developer has to formalize the difference between them.

ML solves this problem using a database that stores the frequency of every letter combination in normal text. For illustration, suppose it records:

– ea – 2 times;

– en – 2 times;

– ik – 1 time, etc.
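For reference, the probability of each pair is usually estimated as its share of all counted pairs. Here $n_{xy}$ denotes how many times the pair $xy$ occurs in the training text. This notation is introduced for illustration. With the illustrative counts above, the total cancels out in the ratio:

$$
\hat{P}(xy) = \frac{n_{xy}}{\sum_{uv} n_{uv}}, \qquad
\frac{\hat{P}(\text{en})}{\hat{P}(\text{ik})} = \frac{n_{\text{en}}}{n_{\text{ik}}} = \frac{2}{1} = 2
$$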

As a result, the algorithm ‘remembers’ that, for example, ‘en’ is twice as likely to occur in text as ‘ik’. Next, the program evaluates the ‘plausibility’ of a line or paragraph by multiplying the probabilities of all its letter combinations. The resulting value characterizes how likely the text is to be ‘clean’ rather than ‘malicious’. A small value indicates a suspiciously large number of rare letter combinations, which is typical of ‘malicious’ text. The program calculates the mean value and the critical deviations from training examples and stores them.
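The procedure above can be sketched in a few dozen lines of Python, under several stated assumptions. Only letter pairs inside words are counted, lowercased. Log-probabilities are summed instead of multiplying raw probabilities, which is mathematically equivalent but avoids floating-point underflow, and the sum is divided by the number of pairs so that short and long texts are comparable. Unseen pairs get a small floor probability (1e-6). The ‘critical deviation’ is taken as three standard deviations below the mean score of the clean training examples. The function names and all of these parameters are hypothetical choices for illustration.

```python
import math
from collections import Counter
from statistics import mean, stdev


def letter_pairs(text):
    """Yield adjacent letter pairs inside each word, ignoring case and punctuation."""
    for word in text.lower().split():
        letters = [c for c in word if c.isalpha()]
        yield from (a + b for a, b in zip(letters, letters[1:]))


def train(corpus):
    """Estimate the probability of each letter pair from 'clean' training text."""
    counts = Counter(letter_pairs(corpus))
    total = sum(counts.values())
    return {pair: n / total for pair, n in counts.items()}


def plausibility(text, probs, floor=1e-6):
    """Log of the product of pair probabilities, averaged per pair.

    Summing logarithms equals taking the log of the product but avoids
    underflow; dividing by the pair count makes texts of different lengths
    comparable. Pairs never seen in training get a small 'floor' probability.
    """
    logs = [math.log(probs.get(pair, floor)) for pair in letter_pairs(text)]
    return sum(logs) / len(logs) if logs else float("-inf")


def make_classifier(probs, clean_examples, k=3.0):
    """Flag text whose score falls more than k standard deviations below the mean."""
    scores = [plausibility(s, probs) for s in clean_examples]
    threshold = mean(scores) - k * stdev(scores)

    def classify(text):
        return "clean" if plausibility(text, probs) >= threshold else "malicious"

    return classify


corpus = ("Michael likes to fish, John prefers to ride a bike, "
          "Smith enjoys reading books, but they are all passionate football fans")
clean_examples = ["Michael likes to fish", "John prefers to ride a bike",
                  "Smith enjoys reading books", "but they are all passionate football fans"]

classify = make_classifier(train(corpus), clean_examples)
print(classify("kcxhIkGnvjdhPhfdRWjshfdldkjOPqS"))  # malicious
print(classify(corpus))                              # clean
```

A real system would train on a far larger corpus. With a single sentence as training data, almost any unfamiliar word would look ‘malicious’.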

Of course, text algorithms are only a small part of what ML can do.

Google Tensor SoC

The announced second-generation Google Tensor SoC (code-named GS201) could be one of this year's highlights.

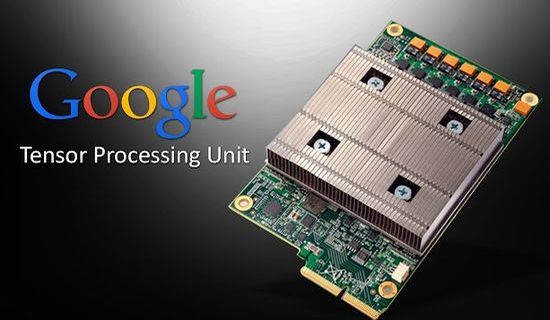

Tensor SoC (2021) with Google’s Tensor Processing Unit (TPU) is focused on enhanced imaging with machine learning.

Google is known to work closely with Samsung to co-develop and manufacture the Tensor SoC. This may explain why its GPU, modem and even some architectural aspects, such as clock and power management, are similar to those of the Exynos 2100.

Having its own SoC allowed the company to drop additional hardware, including the Pixel Visual Core and the Titan M security chip.

The new SoC may not have impressed in terms of raw performance gains, but the company openly warned that this aspect was secondary. Its developers focused on speeding up tasks such as real-time language translation for captions, text-to-speech without an internet connection, image processing and other ML-based capabilities using the integrated TPU. As a result, Google's HDRNet algorithm ran at 4K@60fps for the first time.

In practice, the TPU improved on-device ML performance without a cloud connection, reducing both latency and battery consumption.

Moreover, the Titan M2 security core stores and processes sensitive information (biometric cryptography, etc.), further enhancing security.

Overall, Qualcomm's flagship Snapdragon and Samsung's Exynos chips now face a formidable competitor.

Tests

The leaks that appeared last autumn were not entirely accurate, but more reliable test results are now available.

Tensor vs Snapdragon 888 vs Exynos 2100 (all 5 nm, with 5G modems and LPDDR5 RAM)

CPU

– 2x Arm Cortex-X1 (2.80GHz) + 2x Cortex-A76 (2.25GHz) + 4x Cortex-A55 (1.80GHz) vs

– 1x Arm Cortex-X1 (2.84GHz) + 3x Cortex-A78 (2.4GHz) + 4x Cortex-A55 (1.8GHz) vs

– 1x Arm Cortex-X1 (2.90GHz) + 3x Cortex-A78 (2.8GHz) + 4x Cortex-A55 (2.2GHz);

GPU

– Arm Mali-G78 MP20 vs Adreno 660 vs Arm Mali-G78 MP14;

ML

– Tensor Processing Unit vs Hexagon 780 DSP vs Triple NPU + DSP.

The main distinguishing feature of Tensor is its pair of high-performance Cortex-X1 cores, which come at the expense of the performance and efficiency of the middle cores. Tensor uses an unusual 2+2+4 (big, middle, little) configuration instead of the traditional 1+3+4. According to the developers, this layout handles demanding workloads and machine learning tasks better.

The choice of the older Cortex-A76 instead of the faster A77 or A78 may seem questionable, but there are probably good reasons for it.

Benchmarks

Tensor vs Snapdragon 888 vs Exynos 2100

– CPU – Geekbench 5 (Single Core/Multi Core) – 1014/2679 vs 1100/3223 vs 1109/3620.

For comparison, max (Apple A15 Bionic) – 1722/4768, min (Exynos 990) – 914/2791;

– GPU (3DMark) – 6621 vs 5687 vs 5774.

For comparison, max (Snapdragon 8 Gen 1 in Samsung Galaxy S22 Ultra) – 9841, min (Dimensity 1200 AI) – 4160;

– Speed Test G (CPU/Mixed/GPU) – 38/20/35 vs 33/18/24 vs 33/20/33.

For comparison, max – Snapdragon 888, min (Snapdragon 765G) – 57/30/54 (lower is better here, since the test measures completion time).
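The short script below puts the quoted scores into proportion by expressing each rival's result as a percentage of Tensor's. It is only a convenience sketch. The dictionary re-types the figures above, and `relative_to_tensor` is a hypothetical helper. For Speed Test G it assumes, as the max/min comparison suggests, that the test reports completion times where lower is better.

```python
# Benchmark figures quoted above: (results per chip, higher_is_better)
SCORES = {
    "Geekbench 5 single-core": ({"Tensor": 1014, "Snapdragon 888": 1100, "Exynos 2100": 1109}, True),
    "Geekbench 5 multi-core": ({"Tensor": 2679, "Snapdragon 888": 3223, "Exynos 2100": 3620}, True),
    "3DMark": ({"Tensor": 6621, "Snapdragon 888": 5687, "Exynos 2100": 5774}, True),
    "Speed Test G CPU": ({"Tensor": 38, "Snapdragon 888": 33, "Exynos 2100": 33}, False),
    "Speed Test G GPU": ({"Tensor": 35, "Snapdragon 888": 24, "Exynos 2100": 33}, False),
}


def relative_to_tensor(benchmark, chip):
    """Return a chip's performance as a percentage of Tensor's (100 = parity)."""
    results, higher_is_better = SCORES[benchmark]
    ratio = results[chip] / results["Tensor"]
    # For time-based tests a shorter time means a faster chip, so invert the ratio
    if not higher_is_better:
        ratio = 1 / ratio
    return 100 * ratio


for benchmark in SCORES:
    for chip in ("Snapdragon 888", "Exynos 2100"):
        print(f"{benchmark:25} {chip:15} {relative_to_tensor(benchmark, chip):6.1f}%")
```

With these figures, the Exynos 2100 reaches about 135% of Tensor's Geekbench multi-core score. On 3DMark, by contrast, the Snapdragon 888 reaches only about 86% of Tensor's score.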

On paper, the Qualcomm and Samsung chips are slightly ahead of Tensor in CPU tests. However, all three SoCs offer more performance than most users need, so this difference matters little.

Tensor's GPU, with six more cores than that of the Exynos 2100, outperforms it, although some stress tests show thermal throttling.

Conclusion

To a certain extent, Google Tensor marked a new era in smartphone development. Beyond raw performance, its developers focused on optimizing the SoC for AI and ML. This approach should enable further functional enhancements by improving the efficiency of heterogeneous compute and workloads.

Today, even budget-segment components often offer more performance than needed. For example, the new $450 Google Pixel 6a uses the same Tensor chip as the flagship Pixel 6.

At the same time, several popular ML-based features have appeared over the past few years, including Smart Reply, Recorder App, Live Caption, the HDRNet algorithm and Single Take.

According to preliminary reports, the next-generation Tensor SoC, code-named GS201, will be built on a 4nm process and use a Samsung Exynos 5300 5G modem that has yet to be announced. It may, for example, replace the Cortex-X1, A76 and A55 cores with Cortex-X2, Cortex-A710 and Cortex-A510 cores and include improved Tensor AI hardware. Either way, it will be optimized for ML.

This video introduces the next-generation Tensor SoC.