Improving individual components is the traditional way to improve any device. Unfortunately, its potential is often objectively limited. At a certain stage, further improvement becomes too expensive, and the added customer value no longer compensates for the increase in cost.

The TV segment is a good example. 8K models are held back by the scarcity of 8K content, ultra-bright panels matter only for HDR content that is still hard to come by, and in terms of value for money, huge TVs are gradually losing ground to ultra-short-throw projectors.

In all these examples, the benefit of further improving components is limited by objective factors.

The phone segment is in a similar situation. For example, the recently announced $450 Google Pixel 6a uses the Google Tensor SoC, which is comfortably among the best on the market.

But its performance is already more than enough for traditional tasks, and increasing it further makes little sense.

Industry leaders are responding to these trends by promptly adjusting their strategies.

Google was one of the first to grasp the need for a shift in emphasis. The global giant has focused its efforts on expanding phone functionality through apps, modes, and features based on machine learning (ML).

To a large extent, Google Tensor can be seen as the first ML-optimized mobile chipset.

ML-phone evolution

This trend began in the mid-2010s with the competition over camera image quality. Several companies started actively improving how efficiently their SoCs handle ML tasks, aiming to raise image quality through better processing. By 2017, Apple, Google, Qualcomm, and Huawei had already introduced SoCs with dedicated ML accelerators. ML-based processing significantly improved noise reduction, dynamic range, and low-light shooting, demonstrating the great promise of this technology.

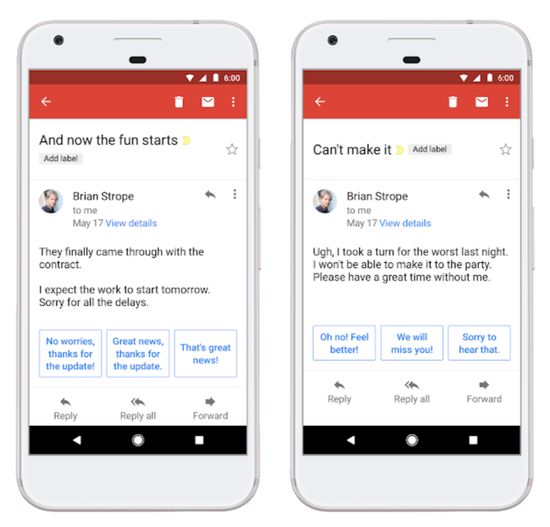

Today, companies mainly build ML apps on top of pre-trained models, which are trained in advance on powerful hardware. Their high performance ensures, for example, almost instantaneous generation of contextual Smart Replies on Android.

Developers ship many universal models that behave the same on every phone, but they offer very little personalization. Nor can they learn from new data in real time.

Moving training from the cloud to individual phones promises a significant increase in efficiency. For example, developers will be able to adapt a keyboard app's suggestions to your typing style. Of course, the huge performance gap between cloud servers and phones will require considerable effort from companies. Nevertheless, the general direction of development in this segment is already clear.

Today, Google Gboard uses a hybrid technology called "federated learning", which combines on-device and cloud-based training. Expanding the on-device part may well become the main path for ML development. However, the core of the model will still require initial training on powerful hardware.
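To make the idea of federated learning more concrete, here is a minimal sketch of federated averaging (FedAvg), the textbook form of the technique. It is not Gboard's implementation: the one-variable linear model, the three simulated phones, and the names `local_update` and `federated_average` are illustrative assumptions. Each phone trains on its own data, and only the resulting model weights, never the raw data, are sent to the server, which averages them.

```python
def local_update(weights, data, lr=0.1, epochs=5):
    """On-device step: a few epochs of SGD on this phone's private (x, y) pairs.

    Model (an illustrative assumption): y = w * x + b.
    The raw data never leaves this function; only the new weights are returned.
    """
    w, b = weights
    for _ in range(epochs):
        for x, y in data:
            err = (w * x + b) - y  # prediction error on one example
            w -= lr * err * x      # gradient of 0.5 * err**2 w.r.t. w
            b -= lr * err          # gradient of 0.5 * err**2 w.r.t. b
    return (w, b)


def federated_average(global_weights, client_datasets, rounds=50):
    """Server loop: send the global model to every phone, collect the locally
    trained weights, and average them weighted by each phone's example count."""
    for _ in range(rounds):
        updates = [(local_update(global_weights, d), len(d)) for d in client_datasets]
        total = sum(n for _, n in updates)
        w = sum(u[0] * n for u, n in updates) / total
        b = sum(u[1] * n for u, n in updates) / total
        global_weights = (w, b)
    return global_weights


# Simulated private datasets: three phones, each holding a few (x, y) pairs
# generated from the same underlying relation y = 2x + 1.
clients = [[(j / 10, 2 * j / 10 + 1) for j in range(k, 11, 3)] for k in range(3)]
w, b = federated_average((0.0, 0.0), clients, rounds=50)
print(f"w={w:.3f}, b={b:.3f}")  # should approach w=2, b=1
```

Weighting the average by example count is the standard FedAvg choice; a production system would also need client sampling and privacy safeguards, which this sketch omits.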

The adaptive brightness feature illustrates the possibilities of this technology well. The ML model monitors how the user interacts with the screen brightness slider and adjusts its settings according to the user's preferences. As a result, in just a week, Android "trains" the phone to choose the optimal brightness for its owner.
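The behaviour described above can be approximated with a very simple online-learning scheme. The sketch below is hypothetical and far simpler than Android's actual model: it splits ambient light into a few logarithmic buckets, starts from a default brightness curve, and nudges the relevant bucket toward whatever level the user picks on the slider. The class name `AdaptiveBrightness`, the bucket count, and the learning rate are all illustrative assumptions.

```python
import math


class AdaptiveBrightness:
    """Hypothetical per-user brightness curve, learned online from slider corrections."""

    def __init__(self, buckets=8, max_lux=100_000.0, learning_rate=0.3):
        self.max_log = math.log10(max_lux + 1)
        self.lr = learning_rate
        # Default curve: brightness rises linearly with log(ambient light), 0.1 .. 1.0.
        self.curve = [0.1 + 0.9 * i / (buckets - 1) for i in range(buckets)]

    def _bucket(self, lux):
        """Map an ambient light reading (lux) to a bucket index on a log scale."""
        frac = min(math.log10(lux + 1) / self.max_log, 1.0)
        return round(frac * (len(self.curve) - 1))

    def predict(self, lux):
        """Brightness (0..1) the phone would pick for this ambient light level."""
        return self.curve[self._bucket(lux)]

    def record_adjustment(self, lux, chosen):
        """The user moved the slider to `chosen`: move that bucket part of the way toward it."""
        i = self._bucket(lux)
        self.curve[i] += self.lr * (chosen - self.curve[i])


# A user who dims the screen to 5% in a dark room every evening for a week.
model = AdaptiveBrightness()
before = model.predict(5)
bright_before = model.predict(50_000)
for _ in range(7):
    model.record_adjustment(5, 0.05)
after = model.predict(5)
print(f"dark-room brightness: {before:.2f} -> {after:.2f}")
```

Because each correction only moves one bucket part of the way, a single accidental slider change has limited effect, while a consistent habit gradually reshapes the curve; preferences in bright daylight stay untouched.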

Most popular ML apps

Camera-related ML continues to develop successfully. For example, Samsung's Single Take uses ML to automatically create an album from a short video clip.

In addition to text prediction and photography, companies are actively developing ML for voice recognition and computer vision.

For example, Google has developed an instant camera translation feature that displays the translation of foreign text in real time. Its accuracy may be inferior to online counterparts, but because it can work offline, it is very convenient for tourists with a limited data plan.

ML is also very effective in augmented reality (AR). In particular, high-fidelity body and hand tracking is well suited to workout tracking and sign language interpretation. A simplified version of this capability is already implemented in the LG G8.

The G8's Air Motion feature from the South Korean giant provides touch-free gesture control, letting users quickly switch between apps, take photos, and more. It relies on the Time of Flight (ToF) sensor and infrared light of the Z Camera system.

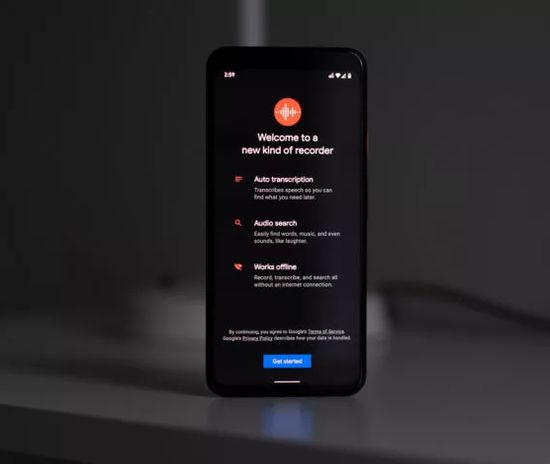

Voice recognition and dictation have been developing successfully for more than a decade. But a fully offline real-time mode was implemented only in 2019, in the Google Recorder app, with the help of ML.

Moreover, the app saves the transcription as editable text, which is very convenient for journalists or students.

ML also works very well in the Live Caption feature, first introduced on the Pixel 4.

It automatically generates captions for any media playing on the phone and is very handy for deciphering, for example, muffled speech against loud background noise.

Conclusion

Having reached a reasonable maximum in smartphone specs, companies are increasingly expanding phone functionality with ML apps. Google Tensor can arguably be positioned as the first ML-focused SoC, with an unusual CPU core configuration (2+2+4) instead of the traditional one (1+3+4). The next-generation Google Tensor processor has already been announced for the fall, and the new Google Pixel 7 series will use it.

Given the success of last year’s chipset, the company is unlikely to make drastic changes in the new version.

Given the global giant's vast experience in app development, Google aims to dominate the ML app segment. But Apple, Samsung, and Huawei are unlikely to step aside quietly. Either way, consumers can only welcome competition between the giants.

This video introduces Live Caption on Android.