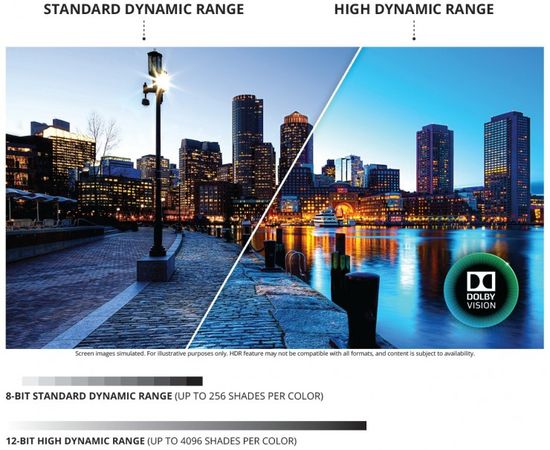

HDR is arguably the most prominent trend in modern television. Dolby Laboratories developed the technology in 2012 under the name Dolby Vision for premium TVs. Its first demonstration at CES 2014 impressed even the most skeptical experts.

Dolby Vision uses 12-bit color instead of the traditional 8-bit color, greatly increasing color depth. In addition, HDR takes advantage of the higher contrast of modern TVs. OLED panels achieve it through very deep black, since they have no LED backlight. LED LCD TVs achieve it through high peak brightness and effective FALD (Full Array Local Dimming) backlighting.

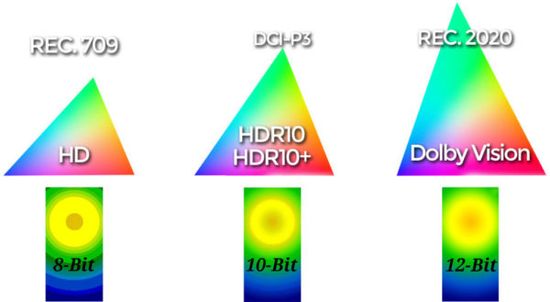

A few years later, LG, Samsung, Sharp, Sony and Vizio joined forces and created the open 10-bit HDR10 standard. A few years after that, companies introduced HDR10+ with support for dynamic metadata. As a result, modern TVs use the 8-bit Rec.709 (HD), 10-bit DCI-P3 (HDR10 and HDR10+) and 12-bit Rec.2020 (Dolby Vision) standards.

The coexistence of several standards naturally raises interest in how color is encoded.

Color rendering coding

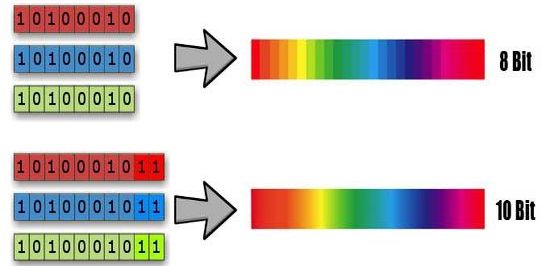

A bit is the basic unit of information storage and holds either “1” or “0”. Accordingly, one bit can set a pixel to either completely black or completely white.

Several bits provide information for the formation of colors and their shades. For example, 2 bits form 4 values, including 00, 01, 10 and 11. 3 bits increase the number of possible combinations to 8, including 000, 001, 010, 011, 100, 101, 110 and 111. Thus, the number of combinations in binary code is 2, raised to the power of the number of bits. For example, the 8-bit standard reaches 256 (2, raised to the power of 8).
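The counting rule above can be checked with a few lines of Python. This is only an illustrative sketch; the function names `bit_patterns` and `level_count` are made up for this example.

```python
from itertools import product


def bit_patterns(n_bits):
    """Return every n-bit pattern as a string, in ascending binary order."""
    return ["".join(bits) for bits in product("01", repeat=n_bits)]


def level_count(n_bits):
    """Number of distinct values that n_bits can encode: 2 to the power n_bits."""
    return 2 ** n_bits


if __name__ == "__main__":
    print(bit_patterns(2))   # the four 2-bit combinations
    print(bit_patterns(3))   # the eight 3-bit combinations
    print(level_count(8))    # levels available in an 8-bit channel
```

Listing the patterns explicitly is practical only for small bit counts; for 8 bits and above, the formula alone is enough.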

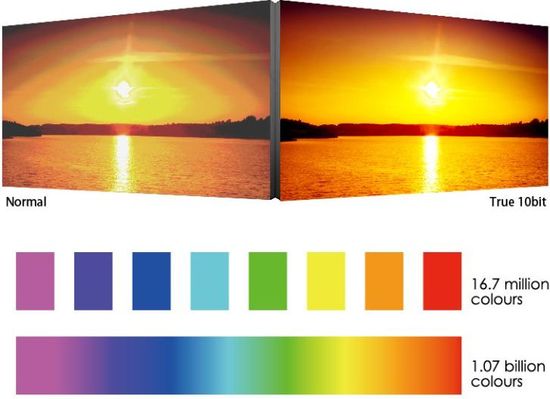

In general, the bit depth determines how finely a given range of values is divided into gradations. The diagram visualizes the difference between 8-bit and 10-bit encoding.

A color image is built from red, green and blue subpixels, each treated as a separate channel. For marketing purposes, companies sometimes quote the number of bits in a way that is not entirely accurate. For example, the 8-bit display mode is labeled as 24-bit “True Color” (3 color channels, 8 bits each, 3 x 8 = 24). By the same method, “Deep Color” (30–48 bits) actually provides from 10 to 16 bits for each color.
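To make the idea of gradation concrete, the sketch below quantizes a smooth left-to-right brightness ramp at different bit depths. The 3840-pixel width is an assumption chosen to match a typical 4K panel, and `quantize` and `band_width_px` are hypothetical helper names.

```python
def quantize(x, bits):
    """Map a brightness x in [0.0, 1.0] to one of 2**bits integer codes."""
    levels = 2 ** bits
    return min(int(x * levels), levels - 1)


def band_width_px(width_px, bits):
    """Average width, in pixels, of one shade when a full ramp spans width_px."""
    return width_px / 2 ** bits


if __name__ == "__main__":
    width = 3840  # assumed 4K panel width
    for bits in (8, 10):
        codes = {quantize(i / (width - 1), bits) for i in range(width)}
        print(bits, "bits:", len(codes), "shades, about",
              band_width_px(width, bits), "px per shade")
```

With fewer bits, each shade covers a wider strip of the screen, which is why a low bit depth tends to show visible steps in smooth gradients.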
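The conversion between the marketing figure and the per-channel figure is simple arithmetic. A minimal sketch, assuming three equal channels; the function names are invented for illustration.

```python
def total_bits_from_channel(channel_bits, channels=3):
    """Marketing-style bits per pixel, e.g. 8 bits x 3 channels = 24."""
    return channel_bits * channels


def channel_bits_from_total(total_bits, channels=3):
    """Bits per color channel for a quoted bits-per-pixel figure."""
    if total_bits % channels != 0:
        raise ValueError("total_bits must divide evenly among the channels")
    return total_bits // channels


if __name__ == "__main__":
    print(total_bits_from_channel(8))    # "True Color"
    print(channel_bits_from_total(30))   # lower end of "Deep Color"
    print(channel_bits_from_total(48))   # upper end of "Deep Color"
```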

Matrix

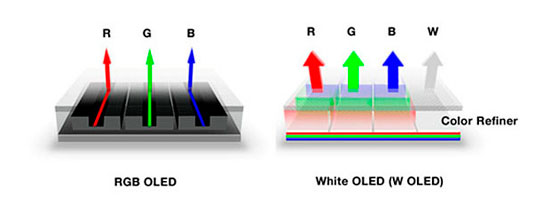

Most modern TVs have an 8-bit matrix that uses the RGB system. Red, green and blue create the full range of colors and their shades. Many companies experiment in this direction. For example, LG uses a WRGB system with an additional white subpixel in its OLED models.

Sharp adds a yellow subpixel (RGBY).

But most companies continue to use the traditional RGB system, in which each of the three subpixels of a pixel can take up to 256 brightness levels at a color depth of 8 bits.

The specification of most TVs includes information about the color depth or the number of colors displayed. This value depends on the matrix and is calculated as follows.

For example, an 8-bit matrix can display 2 x 2 x 2 x 2 x 2 x 2 x 2 x 2 = 256 color shades for each of the three colors. Then, the number of possible combinations will be 256 x 256 x 256 = 16,777,216 or 16.7 million colors.

Accordingly, a 10-bit matrix displays 2 x 2 x 2 x 2 x 2 x 2 x 2 x 2 x 2 x 2 = 1024 shades for each of the three colors. Then, 1024 x 1024 x 1024 = 1,073,741,824 or 1.07 billion colors for each pixel. This value affects the realism of the displayed image.
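The same calculation can be repeated for any bit depth. The following is a small sketch under the article's assumption of three color channels per pixel; `shades_per_channel` and `total_colors` are illustrative names.

```python
def shades_per_channel(bits):
    """Shades one color channel can show at the given bit depth."""
    return 2 ** bits


def total_colors(bits, channels=3):
    """Combinations of shades across all channels of one pixel."""
    return shades_per_channel(bits) ** channels


if __name__ == "__main__":
    for bits in (8, 10, 12):
        print(f"{bits}-bit: {shades_per_channel(bits)} shades per channel, "
              f"{total_colors(bits):,} colors")
```

Each extra bit doubles the shades per channel and multiplies the total number of colors by eight.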

FRC (Frame Rate Control) technology

Color depth directly affects image quality and, accordingly, the price of a TV. Therefore, companies have developed technology that increases the perceived color depth.

An 8-bit matrix displays high-quality 10-bit content with losses. Dithering, or Frame Rate Control (FRC), partially solves this problem by creating the illusion of an intermediate color shade. The TV algorithms approximate the missing colors with the available palette, smoothing color gradients. Engineers have further improved the technology with pixel blinking: a pixel rapidly alternates between two adjacent shades so that the eye perceives a shade in between, which further reduces visible steps in gradients. Today, many mid-range models use an improved version, A-FRC, labeled “8bit + A-FRC”. Their color rendering is inferior to a true 10-bit matrix but better than a plain 8-bit model.

Today, for example, LG uses a 10-bit matrix only in its 9-series and OLED models. The 7 and 8 series TVs use an 8-bit matrix with FRC technology.
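The principle behind FRC can be sketched as temporal dithering. The example below is a deliberately simplified model, not any manufacturer's algorithm: it assumes a 4-frame cycle and ignores the spatial patterns that real panels also use. The function name `frc_frames` is hypothetical.

```python
def frc_frames(value_10bit, n_frames=4):
    """Return the 8-bit levels shown on successive frames for one 10-bit value.

    The 10-bit value is split into an 8-bit base level and a 2-bit remainder.
    The remainder decides in how many frames out of every 4 the pixel shows
    the next higher level, so the time average approximates value_10bit / 4.
    """
    if not 0 <= value_10bit <= 1023:
        raise ValueError("value_10bit must be in the range 0..1023")
    base, remainder = divmod(value_10bit, 4)
    frames = []
    for i in range(n_frames):
        use_high = (i % 4) < remainder
        # Clip at 255 because the 8-bit panel has no higher level.
        frames.append(min(base + 1, 255) if use_high else base)
    return frames


if __name__ == "__main__":
    for value in (512, 513, 514):
        shown = frc_frames(value)
        print(value, shown, "average:", sum(shown) / len(shown))
```

In this model the values 1021 to 1023 cannot be reproduced exactly, since the panel has no level above 255. That is one small illustration of why 8-bit + FRC remains an approximation of a true 10-bit matrix.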

Today, HDR support is one of the important criteria for choosing a TV.

The video illustrates the differences between 8 bit and 10 bit color grading.

P.S.

The choice of the optimal model depends primarily on image quality. That, in turn, depends significantly on the number of shades the TV can reproduce and on the color gamut, which determines which colors the screen can display. In effect, the number of colors is the number of gradations within the color gamut. Accordingly, widening the color gamut without increasing the number of colors (the number of bits per channel) makes the steps between adjacent shades larger, so transitions from one shade to another can appear as visible lines.

The specs are tightly bound by the standards. In 1931 the International Commission on Illumination (CIE) adopted the “CIE 1931” standard for working with color space. It’s based on a mathematical model and includes all human-perceived colors. In fact, this is the maximum of our vision, which corresponds to a color gamut of 100% CIE.

But displaying a photo or video on different devices requires a unified color setting. Different color gamut standards solve this problem, characterizing the colors that the device can process. At the same time, for example, the coverage of the sRGB and Rec.709 standards is almost identical, but sRGB is intended for images, and Rec.709 is for video.

Standards:

– Rec. 2020 (Ultra HD video) – 75.8% of CIE 1931;

– DCI-P3 (digital cinemas) – 45.5% of CIE 1931;

– Rec. 709 (Full HD video) – 35.9% of CIE 1931;

– Adobe RGB (image) – 52.1% of CIE 1931;

– sRGB (image) – 35% of CIE 1931.

Matrix bitness:

– Rec. 709 (HD) – 8-bit matrices;

– DCI-P3 (HDR10 and HDR10+) – 10-bit or 8-bit + FRC;

– Rec. 2020 (Dolby Vision) – 12-bit matrices.
