The rapid development of digital technology has radically changed the consumer electronics segment. Tough competition has forced almost all the leaders in this segment to actively develop and implement their own innovations based on digital technologies. In addition, sharply increased processor performance and more efficient processing algorithms have given developers great opportunities. This undoubtedly positive trend has, however, caused confusion in terminology, and marketing often makes the situation worse.

Introduction

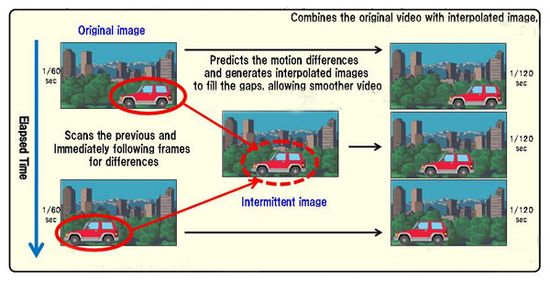

Frame interpolation and dynamic contrast ratio illustrate this situation well. Frame interpolation uses algorithms that generate intermediate frames to smooth the image in fast-moving scenes.

As a result, almost all the leading companies have developed their own technologies and indexes, including CMR (Clear Motion Rate) from Samsung, MCI (Motion Clarity Index) from LG, MXR (Motionflow XR) from Sony, AMR (Active Motion Rate) from Toshiba and PMR (Perfect Motion Rate) from Philips. Companies then began to list frequencies of 400 Hz, 600 Hz, etc. in the specs of their models. These values are calculated taking interpolation into account and do not correspond to the real refresh rate.

Contrast ratio is in a similar situation. The contrast ratio is the ratio of maximum brightness to minimum brightness. Modern algorithms control the brightness very effectively depending on the content of the frame, which yields a huge dynamic contrast. Today, companies often specify 600 000:1 and even 1 000 000:1 in the specs of their models. Dynamic contrast ratio, however, is fundamentally different from traditional ANSI (American National Standards Institute) contrast.
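The definition above can be sketched in a few lines of Python. The luminance values below are hypothetical, chosen only so that the results match the 1300:1 and 600 000:1 figures that appear in specs; they are not measurements of any real TV, and the function name is illustrative.

```python
def contrast_ratio(max_luminance_nits: float, min_luminance_nits: float) -> float:
    """Return the ratio of the brightest to the darkest luminance."""
    if min_luminance_nits <= 0:
        raise ValueError("minimum luminance must be positive")
    return max_luminance_nits / min_luminance_nits


# Hypothetical panel: white and black measured at the same backlight level.
native = contrast_ratio(390.0, 0.3)

# Same hypothetical panel, but black is measured with the backlight
# dimmed almost to zero in a dark scene (assumed value).
dynamic = contrast_ratio(390.0, 0.00065)

print(f"native  {round(native)}:1")
print(f"dynamic {round(dynamic)}:1")
```

The panel is the same in both cases. Only the conditions under which the minimum brightness is measured differ, which is why the two figures cannot be compared directly.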

As a result, the specs of two TVs of roughly the same class may list 100 Hz or 600 Hz for the frequency and 1300:1 or 600 000:1 for contrast. Such a huge difference puzzles many users. Eventually, many experts began to use the term “native”, which denotes the real technical value without taking processing algorithms into account.

Resolution is no exception to this confusion. Many people have probably seen the rather unclear combination of terms “4K Ultra High Definition” in the names of some models.

4K and Ultra High Definition resolution

This combination causes understandable bewilderment, since it appears to contain two synonyms at once. A discussion of this point may therefore help in choosing the optimal model.

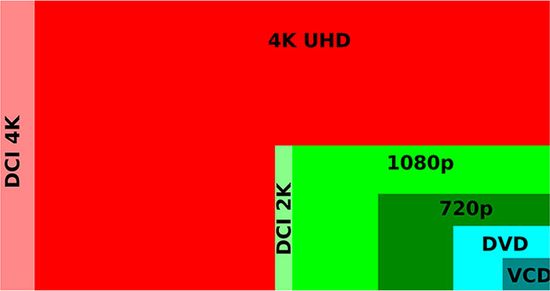

The term 4K appeared in the late 1990s, when film distribution began to use a digital video format. It applies to all digital video standards in which the horizontal number of pixels is approximately 4000. Thus, 4K means 4000, where “K” is short for thousand.

Ultra HD is a marketing term that stands for Ultra High-Definition. It was introduced by the Consumer Electronics Association (CEA) to make it easier for consumers to understand new technical standards. Along with Ultra HD, the association also allowed other marketing names (synonyms), including UHD, UH-Definition and Ultra High-Definition.

In fact, their combination in the same title means that the screen has about 4000 pixels horizontally and provides UHD quality, which covers resolution, color depth, frequency, etc.

4K

Today the market offers a huge number of different devices with 4K support. However, they may differ significantly from each other. Besides the resolution (usually 3840 × 2160), a 4K signal has many other characteristics, including chroma subsampling (color quality), color bit depth, frame rate, etc. All of them significantly affect how the image looks and is perceived.

Today the 4K format includes two standard resolutions (for comparison, HD includes four):

– 3840 × 2160 – this value was chosen by SMPTE (Society of Motion Picture and Television Engineers);

– 4096 × 2160 – this resolution was chosen by the DCI (Digital Cinema Initiatives) to show 4K films in cinemas. Supporting it requires a DCI chip in the film projector.

In practice, the only visible difference is the aspect ratio: DCI uses approximately 1.9:1, and SMPTE uses 16:9.
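The two aspect ratios follow directly from the pixel dimensions. A minimal check in Python, using the standard `fractions` module (the helper name `aspect_ratio` is just for illustration):

```python
from fractions import Fraction


def aspect_ratio(width: int, height: int) -> Fraction:
    """Return width / height reduced to lowest terms."""
    return Fraction(width, height)


smpte = aspect_ratio(3840, 2160)  # reduces to 16/9
dci = aspect_ratio(4096, 2160)    # reduces to 256/135

print(f"SMPTE: {smpte.numerator}:{smpte.denominator} = {float(smpte):.3f}")
print(f"DCI:   {dci.numerator}:{dci.denominator} = {float(dci):.3f}")
```

The DCI frame is 256 pixels wider at the same height, which gives a ratio of roughly 1.9:1 instead of 16:9 (about 1.78:1).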

Specs of modern models often contain “native 4K”, “4K compatible”, “4K capable” or even “4K ready”. Many buyers naturally ask how these differ. The answer depends on the technology the device uses to handle the resolution. Today, companies mainly use two approaches.

4K to Full HD conversion

This technology first reduces the resolution of the original 4K image and only then displays it. For example, Samsung uses the Remastering Engine technology in many of its televisions and positions them as 4K models, but in fact they convert 4K video to Full HD.

A Full HD screen has 1920 × 1080 = 2,073,600 pixels. A UHD screen has 3840 × 2160 = 8,294,400 pixels, which is exactly 4 times more. With this conversion, each displayed pixel therefore carries the information of 4 source pixels. Such an image can look excellent, but objectively it corresponds only to Full HD.
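The arithmetic, and the idea of one displayed pixel standing in for four source pixels, can be sketched as follows. The averaging of each 2 × 2 block is an assumption made for illustration (a simple box filter); it is not a description of how any vendor's conversion actually works.

```python
def pixel_count(width: int, height: int) -> int:
    """Total number of pixels on a screen of the given size."""
    return width * height


def downscale_2x2(image: list[list[float]]) -> list[list[float]]:
    """Halve both dimensions by averaging each 2x2 block (box filter).

    `image` is a grayscale picture stored as a list of rows; odd
    trailing rows or columns are ignored in this sketch.
    """
    result = []
    for y in range(0, len(image) - 1, 2):
        row = []
        for x in range(0, len(image[y]) - 1, 2):
            block_sum = (image[y][x] + image[y][x + 1]
                         + image[y + 1][x] + image[y + 1][x + 1])
            row.append(block_sum / 4)
        result.append(row)
    return result


full_hd = pixel_count(1920, 1080)
uhd = pixel_count(3840, 2160)
print(full_hd, uhd, uhd // full_hd)

# A tiny 4x4 "source" becomes a 2x2 "display" image.
source = [
    [0, 4, 10, 10],
    [8, 4, 10, 10],
    [1, 1, 0, 0],
    [1, 1, 0, 8],
]
print(downscale_2x2(source))
```

Whatever filter is used, the fine detail inside each 2 × 2 block is merged into a single value, so the displayed picture cannot contain more detail than Full HD.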

Usually, the manual for such models states that 4K is not the native resolution of the device and that it uses 4K to Full HD conversion.

Today, the professional audiovisual market already offers projectors with a native resolution of 2560 × 1600 that are capable of displaying a 4K image. Some companies list the resolution of their projectors as follows:

– Resolution 3,840 x 2,400 (4K UHD) / 2,560 x 1,600 (WQXGA native).

Note that the native resolution is listed only after the 4K figure. Many rightly consider this way of presenting the information misleading.

Wobulation, pixel shifting or 4K Enhancement Technology

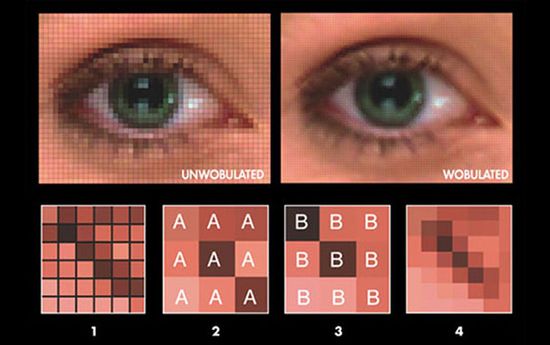

This more promising and “honest” technology overlays pixels. First, the algorithm splits the image data into several subgroups. Then an optical image-shifting mechanism (for example, a crystal of an LCD display) shifts the projected image of each subgroup by a fraction of a pixel (for example, by half or one third). Because the projections follow each other at high speed, they appear to overlap. As a result, the viewer perceives a 4K image, although it is drawn by the individual pixels of the LCD crystals or DLP mirrors.

In fact, wobulation increases the image resolution at the cost of slightly reduced sharpness.
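The splitting and shifting steps can be illustrated with a small Python sketch. It assumes a shift of half a pixel in both directions, which gives four subframes; the number of subframes and the size of the shift vary between devices, and the function names are hypothetical. The sketch also treats every pixel as an ideal point, so it ignores the physical overlap of neighbouring shifted pixels that reduces sharpness.

```python
def split_into_subframes(target, steps=2):
    """Split a high-resolution image into steps*steps low-resolution subframes.

    Subframe (dy, dx) holds the samples the panel shows while the optics
    shift the picture by dy/steps and dx/steps of a panel pixel.
    Both image dimensions are assumed to be multiples of `steps`.
    """
    return {
        (dy, dx): [row[dx::steps] for row in target[dy::steps]]
        for dy in range(steps)
        for dx in range(steps)
    }


def perceived_image(subframes, steps=2):
    """Interleave the shifted subframes back onto the fine pixel grid."""
    height = len(subframes[(0, 0)])
    width = len(subframes[(0, 0)][0])
    fine = [[None] * (width * steps) for _ in range(height * steps)]
    for (dy, dx), frame in subframes.items():
        for y, row in enumerate(frame):
            for x, value in enumerate(row):
                fine[y * steps + dy][x * steps + dx] = value
    return fine


# A 4x4 "source" image shown on a 2x2 "panel" in four quick passes.
target = [[r * 4 + c for c in range(4)] for r in range(4)]
subframes = split_into_subframes(target)
print(subframes[(0, 0)])
print(perceived_image(subframes) == target)
```

In this idealized model the panel has only a quarter of the target's pixels, yet every source sample is shown once per cycle. A real device cannot reproduce the source this exactly, because each projected pixel is larger than the step by which it is shifted.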

Sharpness is a subjective characteristic of an image and determines how distinguishable its fine details are. People perceive it differently, depending on, among other things, the viewing conditions and the content. But most viewers with normal vision respond poorly to an image with low detail.

Resolution characterizes the minimum size of distinguishable details, which depends on the pixel size of the matrix and the resolution of the content. This value is less subjective and can be measured accurately. In general, the two characteristics are very similar from a physical point of view, although they are interpreted differently. Wobulation or pixel shifting increases the resolution (or detail) of the image, but the simultaneous decrease in sharpness partially reduces the efficiency of this algorithm.

Conclusion

Can the human eye notice the difference between native 4K and wobulation technology? Experts have not yet formed a clear opinion. However, the pixels are practically unnoticeable even from a very short distance.

Companies give this technology different names in the specs of their models. For example, JVC uses the term “e-shift”. Most importantly, devices with this technology are about 50% cheaper than models with native 4K resolution.

This video clearly illustrates how pixel shifting works.
